Dear all,

First, congratulations on this really good piece of software, which I've been using for years.

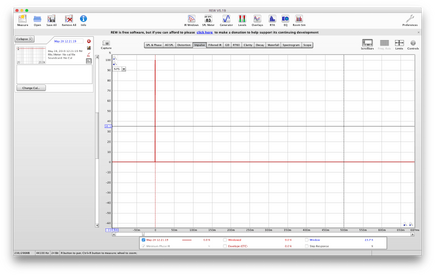

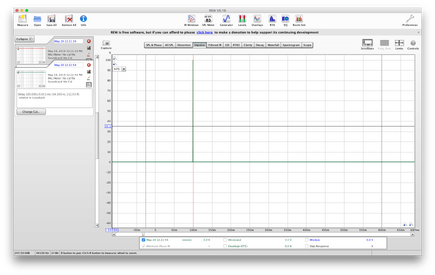

I'm facing a simple problem when measuring impulse responses, for which I still have not found a consistent solution (despite my previous post): if "no timing reference" is selected, the impulse response seems to be automatically time-shifted so that its peak is at t=0, whatever the value of the "set t=0 at IR peak" setting. The same happens when importing sweep recordings, even if the option "For imports set t=0 at first sample" is selected.

I observed this problem with both the latest official version (5.19) and the beta version (5.20 beta 10).

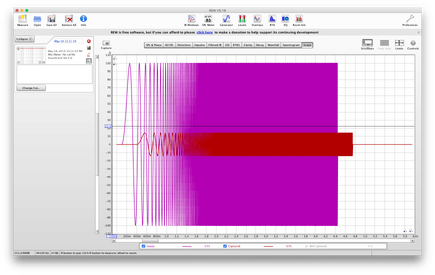

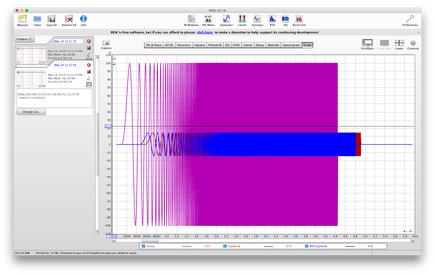

On a different note, I also sometimes encounter problems when using loopback as a timing reference: typically, pre-ringing sometimes appears in the estimated IR. I therefore assume that the estimated transfer function is divided by the transfer function of the loopback channel (or maybe only by its all-pass component), and that artefacts appear during the spectral division. Am I right? If so, would it be possible, as an alternative method, to correct the estimated transfer function with a pure linear phase shift (i.e. a pure delay), which could for instance be estimated by correlation on the loopback channel?
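To make the suggestion concrete, here is a minimal Python/NumPy sketch of the kind of pure-delay correction I have in mind. It is only an illustration under my own assumptions, not a description of how the software works internally: the function names `estimate_delay_samples` and `remove_delay` are hypothetical, the delay is rounded to a whole number of samples, and the loopback signal is assumed to be essentially a delayed copy of the test signal.

```python
import numpy as np


def estimate_delay_samples(reference, loopback):
    """Estimate the lag (in samples) of `loopback` relative to `reference`.

    Hypothetical helper: uses FFT-based cross-correlation and returns the
    integer lag with the largest absolute correlation.
    """
    reference = np.asarray(reference, dtype=float)
    loopback = np.asarray(loopback, dtype=float)
    n = len(reference) + len(loopback) - 1
    nfft = 1 << (n - 1).bit_length()  # zero-pad to avoid circular wrap-around
    # corr[k] = sum over m of loopback[m + k] * reference[m]
    spec = np.fft.rfft(loopback, nfft) * np.conj(np.fft.rfft(reference, nfft))
    corr = np.fft.irfft(spec, nfft)
    lag = int(np.argmax(np.abs(corr)))
    # Indices at or beyond len(loopback) correspond to negative lags
    if lag >= len(loopback):
        lag -= nfft
    return lag


def remove_delay(ir, delay_samples):
    """Shift `ir` earlier by an integer number of samples, zero-filling the gap.

    A pure shift leaves the shape of the response untouched, so it cannot
    introduce pre-ringing by itself.
    """
    ir = np.asarray(ir, dtype=float)
    out = np.zeros_like(ir)
    if abs(delay_samples) >= len(ir):
        return out  # everything is shifted out of the window
    if delay_samples >= 0:
        out[:len(ir) - delay_samples] = ir[delay_samples:]
    else:
        out[-delay_samples:] = ir[:len(ir) + delay_samples]
    return out
```

In this sketch, the lag found on the loopback channel would simply be passed to `remove_delay` together with the IR of the measurement channel. A sub-sample delay would need a linear phase ramp in the frequency domain instead of an integer shift, which I have left out to keep the example short.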

Best regards,

Alexis Baskind
